Technical Overview

For IT & Architecture Teams

Everything your technical team needs to evaluate Nandu: architecture, security, deployment, data handling, and integration details.

System Architecture

Multi-agent orchestration, not a prompt wrapper

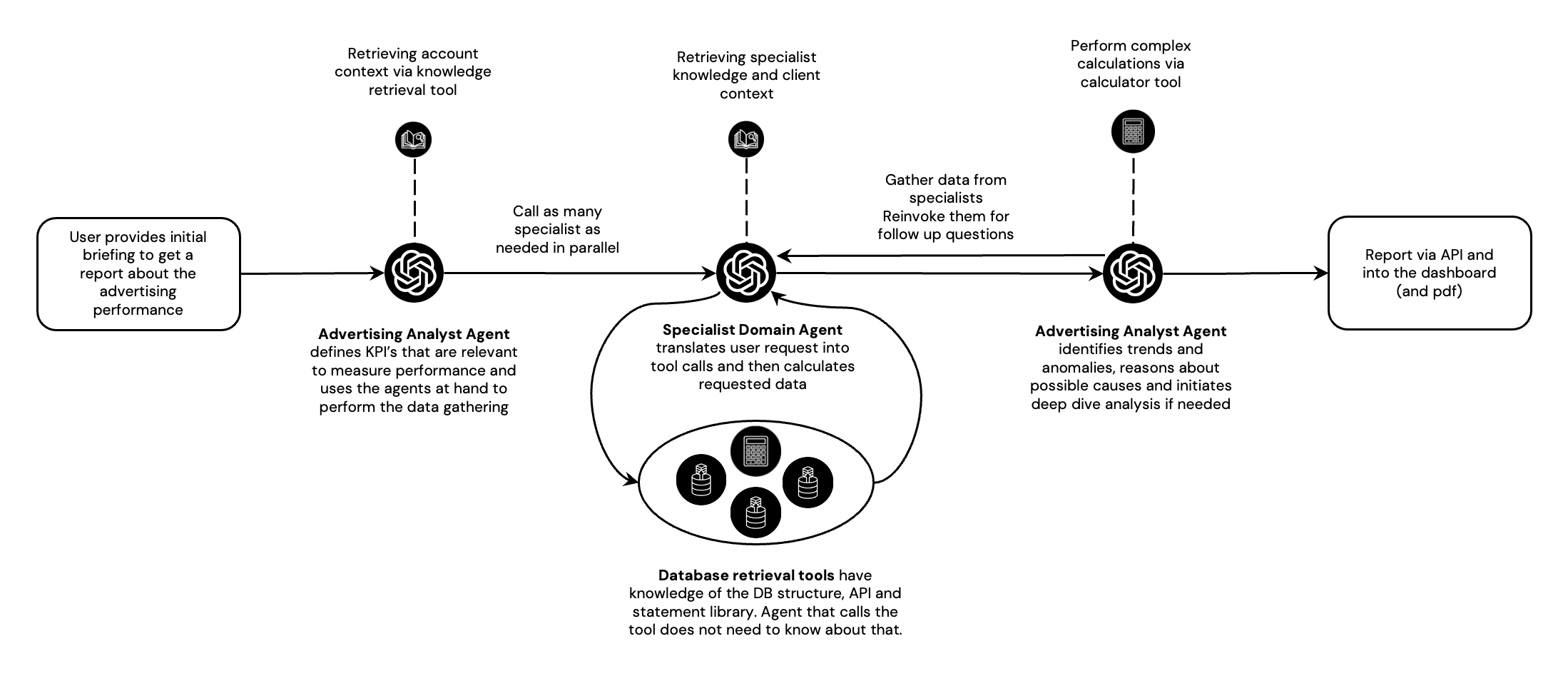

Nandu is built on a LangGraph-based multi-agent architecture where specialized agents handle distinct responsibilities. This is fundamentally different from single-prompt AI tools — each step is auditable, each computation is deterministic, and quality is validated before delivery.

Multi-Agent Orchestration

Specialized agents handle orchestration, data retrieval, analysis, and quality assurance as separate, auditable steps.

Deterministic Computation

LLMs are restricted from performing calculations. All computation runs through controlled tools — Python code interpreters and SQL query builders — ensuring reproducible, verifiable results.
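As a rough illustration of that split, the sketch below keeps the arithmetic in an ordinary Python function and only lets the model pick a tool by name. The registry, the function names, and the choice of ACOS (ad spend divided by ad sales) as the example metric are assumptions made for this sketch, not Nandu's actual tool set.

```python
from decimal import Decimal, ROUND_HALF_UP


def acos_percent(ad_spend: str, ad_sales: str) -> Decimal:
    """Return ACOS (ad spend / ad sales) as a percentage with two decimals."""
    spend, sales = Decimal(ad_spend), Decimal(ad_sales)
    if sales == 0:
        raise ValueError("ACOS is undefined when ad sales are zero")
    return (spend / sales * 100).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


# Hypothetical registry: the model may name a tool and pass arguments,
# but the number itself always comes from the function above.
TOOLS = {"acos_percent": acos_percent}


def run_tool(name: str, **kwargs) -> Decimal:
    """Dispatch a tool call by name and reject anything not registered."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)
```

Because the inputs are passed as decimal strings and the rounding mode is fixed, the same call returns the same value on every run, which is the property the section describes.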

Critic Agents & QA

Dedicated critic agents validate every output before delivery. They perform sanity checks, detect anomalies, and trigger reruns when quality thresholds are not met.
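A validate-and-rerun loop of this kind could look roughly like the following. This is a minimal sketch in plain Python, not the LangGraph implementation; the checks, the row shape, and the names `critic` and `run_with_critic` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Verdict:
    ok: bool
    issues: list[str]


def critic(rows: list[dict]) -> Verdict:
    """Run simple sanity checks on result rows (illustrative checks only)."""
    issues = []
    if not rows:
        issues.append("no rows returned")
    for row in rows:
        sales = row.get("ad_sales")
        if sales is None:
            issues.append(f"missing ad_sales for {row.get('campaign')}")
        elif sales < 0:
            issues.append(f"negative ad_sales for {row.get('campaign')}")
    return Verdict(ok=not issues, issues=issues)


def run_with_critic(
    analyze: Callable[[int], list[dict]], max_attempts: int = 3
) -> list[dict]:
    """Re-run the analysis until the critic accepts it or attempts run out."""
    last_issues: list[str] = []
    for attempt in range(1, max_attempts + 1):
        rows = analyze(attempt)
        verdict = critic(rows)
        if verdict.ok:
            return rows
        last_issues = verdict.issues
    raise RuntimeError(f"critic rejected {max_attempts} attempts: {last_issues}")
```

Capping the number of attempts and raising on failure means a result that never passes validation is surfaced as an error instead of being delivered.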

Knowledge Layer

Domain expertise, analysis methodologies, and client-specific context are stored as structured knowledge — not embedded in prompts. This enables consistent, auditable reasoning across all analyses.

How the agents work together

When a user asks a question, Ana (the Business Intelligence Manager) plans the analysis: which KPIs are relevant, what data is needed, and what methodology to apply. She loads the client's brand context, business objectives, and any relevant analysis templates from the knowledge base.

Ana then delegates data retrieval to Emma, a specialized agent that constructs SQL queries using deterministic query builders — not LLM-generated SQL. Emma queries the data warehouse (BigQuery, PostgreSQL, or ClickHouse) with per-client credentials, ensuring complete data isolation.
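One way to picture a deterministic query builder: the SQL text is assembled only from allow-listed fragments, and user-supplied values travel as bound parameters. The table name, column names, and the pyformat placeholder style (as used by psycopg for PostgreSQL) are assumptions for this sketch; Emma's real builder and schema are not documented here.

```python
# Allow-lists: only these fragments can ever appear in the generated SQL.
ALLOWED_METRICS = {
    "ad_spend": "SUM(ad_spend)",
    "ad_sales": "SUM(ad_sales)",
}
ALLOWED_DIMENSIONS = {"campaign", "marketplace"}


def build_query(metrics: list[str], dimension: str, marketplace: str):
    """Return (sql, params) for an aggregate over a hypothetical stats table."""
    unknown = [m for m in metrics if m not in ALLOWED_METRICS]
    if unknown:
        raise ValueError(f"unsupported metrics: {unknown}")
    if dimension not in ALLOWED_DIMENSIONS:
        raise ValueError(f"unsupported dimension: {dimension}")

    select = ", ".join(f"{ALLOWED_METRICS[m]} AS {m}" for m in metrics)
    sql = (
        f"SELECT {dimension}, {select} "
        "FROM campaign_stats "                    # hypothetical table name
        "WHERE marketplace = %(marketplace)s "    # value is bound, not inlined
        f"GROUP BY {dimension} ORDER BY {dimension}"
    )
    return sql, {"marketplace": marketplace}
```

The same inputs always yield the same SQL string, so a logged query can be replayed and compared against the delivered result.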

All calculations run through controlled tools (Python code interpreters and SQL aggregation), never through the LLM. This means every number in the output is reproducible and verifiable. Critic agents then validate the results: checking for anomalies, data gaps, and logical consistency before the analysis is delivered.

The result: expert-level analysis with traceable reasoning, deterministic computation, and quality guarantees — not a black box that sometimes gets it right.

Data Handling & Privacy

Your data stays yours — at every layer

Enterprise data security is not a feature we added — it's how the system was designed from day one. Every layer enforces isolation, encryption, and access control.

Client Data Isolation

Each client's data is fully isolated with separate credentials and access controls. No cross-client data access is possible at any layer.
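A simplified picture of per-client isolation at the configuration level is sketched below. The client names, secret paths, and the `config_for` helper are invented for illustration, and a real deployment would enforce the same boundary in the warehouse and the identity layer as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClientConfig:
    client_id: str
    warehouse: str        # e.g. "bigquery", "postgresql", "clickhouse"
    credentials_ref: str  # pointer to a secret, never the secret itself


# Hypothetical per-client registry; each entry has its own credentials.
_CONFIGS = {
    "acme": ClientConfig("acme", "bigquery", "secrets/acme/dwh"),
    "globex": ClientConfig("globex", "postgresql", "secrets/globex/dwh"),
}


def config_for(session_client_id: str, requested_client_id: str) -> ClientConfig:
    """Resolve runtime config, refusing any request outside the session's client."""
    if session_client_id != requested_client_id:
        raise PermissionError("cross-client access denied")
    return _CONFIGS[requested_client_id]
```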

No Training on Your Data

AI models used by Nandu are contractually prohibited from using client data for training. Your data is processed for analysis only.

European Data Residency

All data processing and storage run on ISO 27001 certified Google Cloud servers within the European Union. No data leaves the EU.

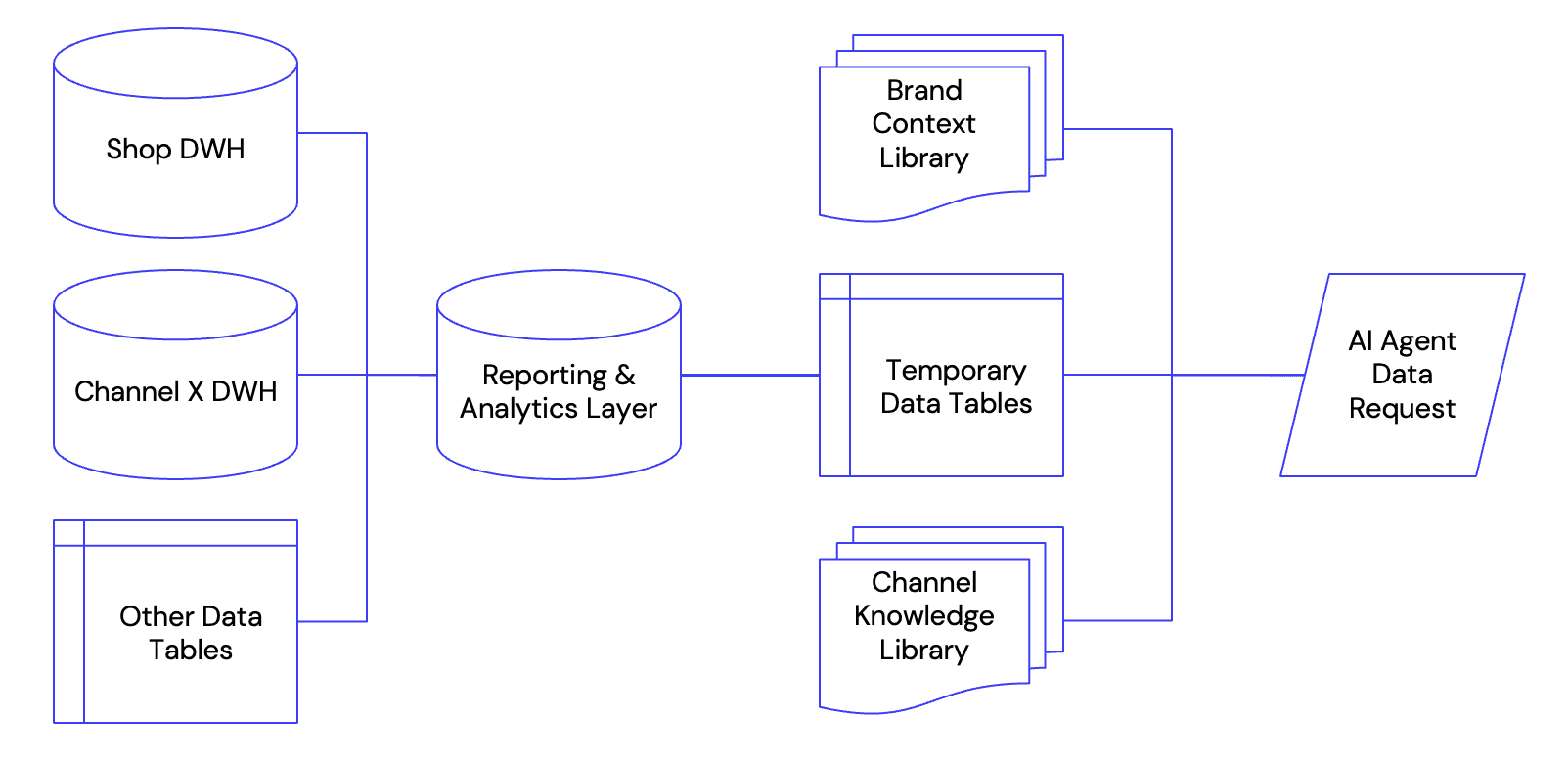

Data Warehouse Flexibility

Nandu connects to your existing DWH (BigQuery, PostgreSQL, ClickHouse, or custom via API/SQL). Alternatively, we provide a managed BigQuery instance with automated pipelines.

Deployment Options

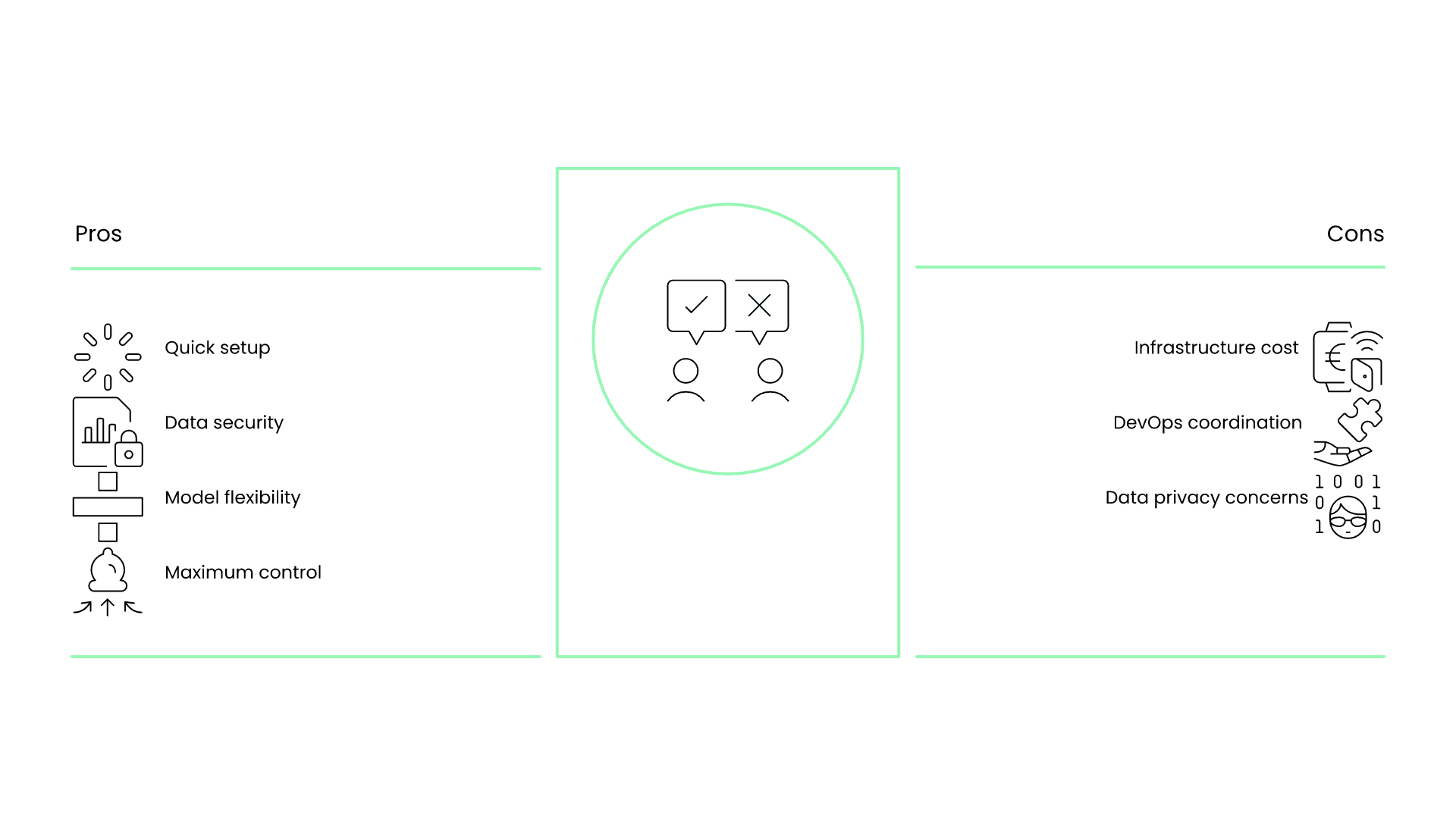

From fully managed to fully on-premise

Choose the deployment model that fits your security requirements and infrastructure strategy. All options deliver the same analytical capabilities.

Nandu Cloud (Managed)

Most popular

Fully managed SaaS. European hosted, ISO 27001 certified. Connect your data sources and start in days. Zero infrastructure overhead on your side.

Your Data Warehouse

Data stays in your infra

Nandu agents connect directly to your existing DWH. Our data retrieval layer adapts to your schema and access patterns. Your data never leaves your infrastructure.

Client LLMs

Model flexibility

Replace Nandu's default LLMs with your company's own models (Azure OpenAI, AWS Bedrock, or self-hosted). Agent logic remains the same; only the model endpoint changes.
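The idea that only the endpoint changes can be sketched with a small interface that the agent logic depends on. Everything here is hypothetical: the `ChatModel` protocol, the `EndpointModel` stub, and the example URLs are placeholders, and the stub returns a tagged string instead of calling a real model.

```python
from dataclasses import dataclass
from typing import Protocol


class ChatModel(Protocol):
    def complete(self, prompt: str) -> str: ...


@dataclass
class EndpointModel:
    provider: str  # e.g. "default", "azure-openai", "bedrock", "self-hosted"
    endpoint: str  # placeholder URL; a real client would send the prompt here

    def complete(self, prompt: str) -> str:
        # Stub: tags the prompt with the provider instead of calling the endpoint.
        return f"[{self.provider}] {prompt}"


def plan_analysis(model: ChatModel, question: str) -> str:
    """Agent logic depends only on the ChatModel interface, not on a vendor."""
    return model.complete(f"Plan the analysis for: {question}")


default_model = EndpointModel("default", "https://llm.example.com/v1")
client_model = EndpointModel("azure-openai", "https://llm.example.internal/v1")
```

Passing `client_model` instead of `default_model` to `plan_analysis` changes where the prompt would be sent, while the planning code itself stays untouched.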

On-Premise

Maximum control

Full on-premise deployment for maximum control. Nandu runs entirely within your infrastructure. Requires coordination with your DevOps team.

Security & Compliance

Enterprise-grade from day one

Security is built into the design of the system, not added afterwards. We provide ISO 27001 certification, GDPR compliance, and contractual guarantees that your data is never used for AI training.

- ISO 27001 certified infrastructure (Google Cloud, EU)

- GDPR compliant: full compliance with European data protection law

- SOC 2 Type II on roadmap

- Multi-level authentication via ISO 27001 certified provider (Clerk)

- Role-based access control per organization

- All API communication encrypted via TLS 1.3

- Audit logging for all agent actions and data access

- No client data used for AI model training — contractually guaranteed
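For the audit logging item in the list above, an entry might be structured along these lines. The field names and the JSON format are assumptions for illustration; the actual log schema is not specified in this overview.

```python
import json
from datetime import datetime, timezone


def audit_record(agent: str, action: str, client_id: str, detail: dict) -> str:
    """Serialize one agent action as a JSON line with a UTC timestamp."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "client_id": client_id,
        "detail": detail,
    }
    # sort_keys keeps the field order stable so entries are easy to diff.
    return json.dumps(record, sort_keys=True)


line = audit_record("Emma", "warehouse_query", "acme", {"table": "campaign_stats"})
```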

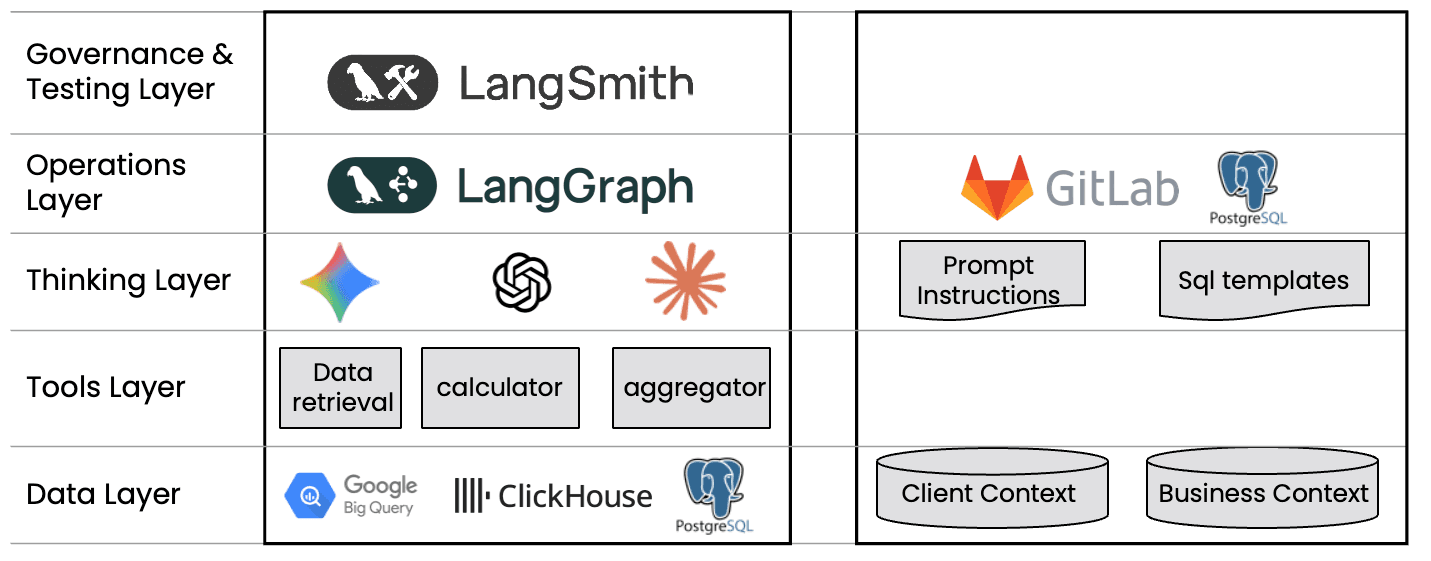

Technology Stack

Built on proven, auditable infrastructure

Every component in the stack is chosen for reliability, observability, and enterprise readiness. No black boxes — every agent action is traceable via LangSmith, every query is logged, every computation is reproducible.

Integration Patterns

Fits into your existing stack

API Access

RESTful API for programmatic access. Trigger analyses, retrieve results, and integrate Nandu outputs into your existing workflows and dashboards.
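As a hedged sketch, a client could build the `POST /api/v1/analyze` request documented in this section with the Python standard library. The host name and the bearer-token header are assumptions; this overview does not specify the base URL or the authentication scheme.

```python
import json
import urllib.request

BASE_URL = "https://nandu.example.com"  # placeholder host, not a real endpoint


def build_analyze_request(
    query: str, assistant_id: str, token: str
) -> urllib.request.Request:
    """Build (but do not send) a POST request for the analyze endpoint."""
    body = json.dumps({"query": query, "assistant_id": assistant_id}).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/api/v1/analyze",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",  # assumed auth scheme
        },
    )


req = build_analyze_request(
    "Compare ACOS across DE campaigns", "your-assistant-id", "test-token"
)
```

Sending it would be a call to `urllib.request.urlopen(req)`, with the JSON response parsed by `json.loads`.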

POST /api/v1/analyze

{
  "query": "Compare ACOS across DE campaigns",
  "assistant_id": "your-assistant-id"
}

Data Source Connectors

Out-of-the-box: Amazon Advertising, Seller Central, Vendor Central, DSP, AMC. Custom: any SQL-accessible data source, REST APIs, or file-based imports.

Supported:

• Amazon Advertising API

• Seller Central / Vendor Central

• DSP & AMC

• Any SQL-accessible source

Output Formats

Chat interface, scheduled reports (email/API), PDF export, CSV data export. Structured JSON responses available via API for downstream processing.

Formats:

• Chat (real-time)

• Scheduled reports (email/API)

• PDF / CSV export

• JSON via API

SSO & Identity

SAML and OIDC support via Clerk. Integrate with your existing identity provider for seamless single sign-on.

Protocols:

• SAML 2.0

• OIDC

• Clerk-managed SSO

Technical FAQ

Can we use our own LLMs instead of OpenAI?

Yes. Enterprise deployments can use Azure OpenAI, AWS Bedrock, or self-hosted models. The agent architecture is model-agnostic — only the LLM endpoint configuration changes.

How is client data isolated?

Each client has separate database credentials and isolated data access. The agent system uses per-client configuration at runtime. No shared data stores, no cross-client queries.

What happens if the AI produces incorrect results?

Critic agents validate every output before delivery. LLMs are restricted from performing calculations — all computation runs through deterministic tools (SQL, Python). Results include traceable reasoning steps for manual verification.

Can Nandu connect to our existing data warehouse?

Yes. Nandu supports BigQuery, PostgreSQL, and ClickHouse natively. For other DWH systems, we build custom connectors via SQL or API. Your data stays in your infrastructure.

What is the typical implementation timeline?

Standard Amazon analytics: 1-2 weeks from kick-off to live. Custom DWH integration: 4-8 weeks including data mapping, agent configuration, and quality validation.

Do you have a security questionnaire or documentation package?

Yes. We provide a pre-filled CAIQ (Consensus Assessments Initiative Questionnaire), our ISO 27001 certificate, data processing agreement (DPA), and technical architecture documentation. Contact us to request the package.

Have a technical question that's not here? Let's talk.

Ready for a technical deep dive?

Our team is happy to walk through the architecture with your IT and security teams. We'll answer every question.